Top 10 AI and Compliance Interview Questions You Should Be Ready For

Written by Matthew Hale

Artificial intelligence (AI) applications are transforming most domains within organizations, and compliance remains one of the areas where that transformation collides most sharply with regulation and ethics.

Organizations of all sizes now use AI across their businesses, in decision-making, risk management, customer engagement, and process automation. As a result, there is growing demand for compliance professionals who understand operational processes and can handle the risks associated with AI systems.

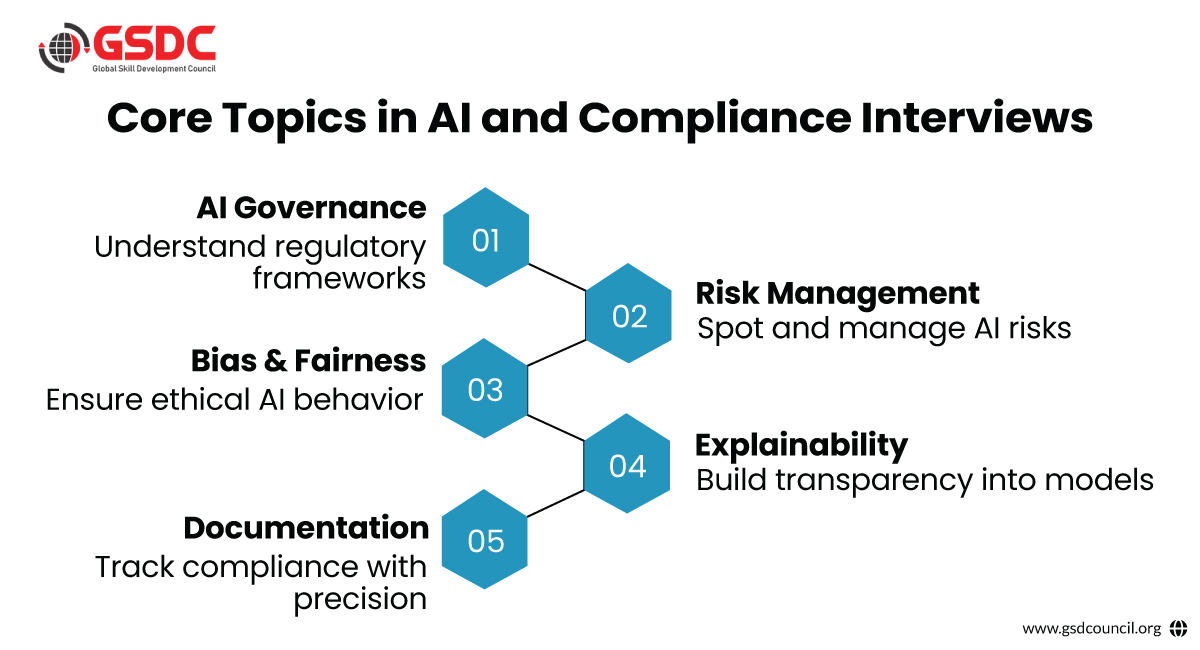

We explore the top 10 most relevant interview questions related to AI and compliance. These questions are designed to assess your understanding of legal, ethical, and governance issues in AI, and how artificial intelligence can be used in compliance while staying aligned with global standards.

Understanding how to implement an effective AI compliance framework and integrate AI in risk and compliance strategies is becoming critical across regulated industries.

Top 10 AI and Compliance Interview Questions

1. What are the key regulatory frameworks and guidelines that govern AI, and how would you ensure our AI systems comply with them?

This foundational question tests your awareness of key laws and frameworks that shape the landscape of AI in risk and compliance.

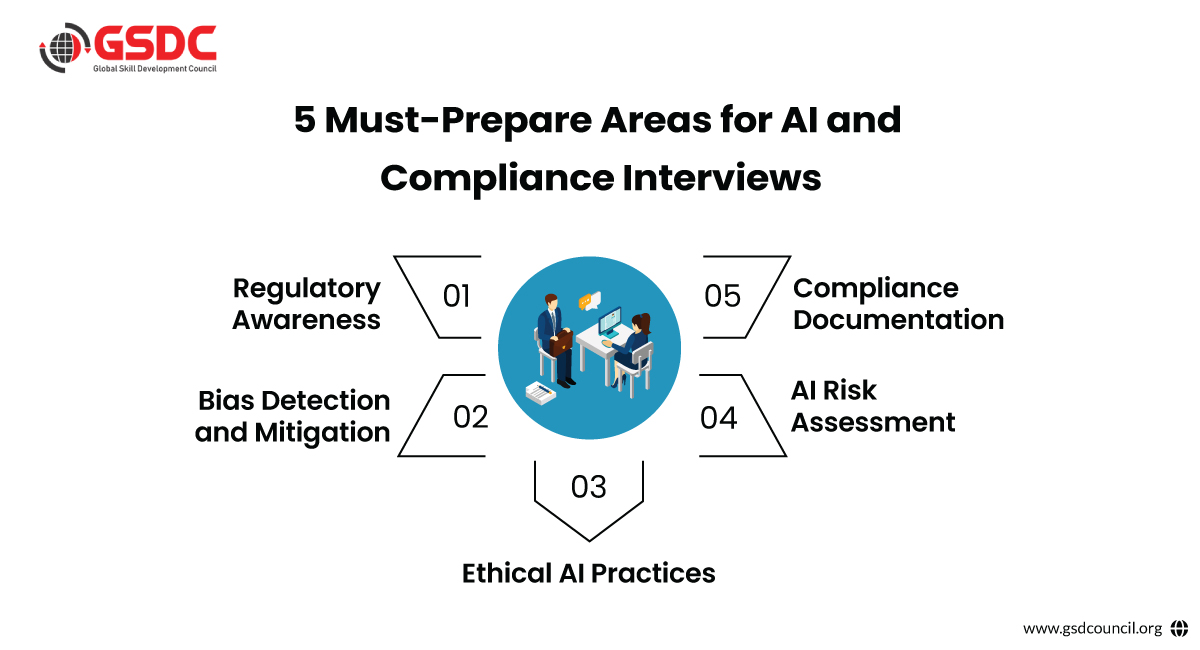

You should be able to discuss the EU General Data Protection Regulation (GDPR), which sets rules for automated decision-making and requires data protection by design.

You should also be familiar with the EU AI Act, which takes a risk-based approach and classifies AI systems according to their potential harm to individuals and society (HBR.org).

In the U.S., the Blueprint for an AI Bill of Rights provides non-binding ethical guidance, emphasizing fairness, transparency, privacy, and accountability.

Operationalizing these laws means conducting privacy impact assessments, documenting AI lifecycle processes, ensuring human oversight, and keeping policies up to date as regulations evolve (IBM.com). These steps are vital components of a strong AI compliance framework.

Certifications from organizations like GSDC can further validate your expertise in AI and compliance and help you to stand out.

2. What unique compliance challenges do AI development and deployment present, and how would you address them?

AI introduces novel complexities, including dealing with large datasets (often personal or sensitive), potential algorithmic bias, lack of explainability, and data security issues.

The "black box" nature of many models makes auditing difficult, which complicates compliance.

Your answer should demonstrate awareness of these challenges and offer strategies like data minimization, consent mechanisms, regular audits, and use of interpretable models.

Highlight the importance of continuous validation and a multidisciplinary approach involving legal, technical, and ethical perspectives (Dialzara.com). These actions are central to ensuring effective AI in risk and compliance processes.
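Data minimization, mentioned above, can be illustrated with a small sketch. It assumes a model that only needs a short allow-list of fields; the field names and the sample record are hypothetical, not taken from any real system.

```python
# Hypothetical allow-list: the only fields this model is assumed to need.
ALLOWED_FIELDS = {"age_band", "account_tenure_months", "region"}


def minimize(record, allowed=ALLOWED_FIELDS):
    """Return a copy of `record` that keeps only allow-listed fields."""
    return {key: value for key, value in record.items() if key in allowed}


# Made-up customer record containing more personal data than the model needs.
raw_record = {
    "full_name": "Jane Example",
    "email": "jane@example.com",
    "age_band": "35-44",
    "account_tenure_months": 27,
    "region": "EU-West",
}

training_record = minimize(raw_record)
print(sorted(training_record))
```

Applying the filter before data reaches the training pipeline means direct identifiers such as names and email addresses never enter it, which also narrows the scope of later audits.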

3. How would you identify and mitigate bias in an AI model to ensure it makes fair decisions?

AI systems can absorb and reproduce human prejudices present in their training data. The resulting discriminatory outcomes are both an ethical problem and a legal one under various laws, such as the GDPR.

Interviewers want to see that you can examine training data for fairness, apply fairness metrics, and explain the implications of disparate impact.

Effective mitigation strategies might include:

- Rebalancing biased datasets

- Using algorithmic fairness tools (e.g., IBM’s AI Fairness 360)

- Including diverse test groups during validation

- Regularly updating models to reflect changing populations

This reflects your ability to implement a robust AI compliance framework that ensures legal and ethical integrity (Shrutikp.medium.com).
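As a rough illustration of a fairness metric, the sketch below computes a disparate impact ratio: the favorable-outcome rate of one group divided by that of a reference group. The decision data is made up, and the 0.8 cut-off is the "four-fifths" rule of thumb from US employment guidance, used here only as an assumed screening threshold rather than a legal test.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes per group.

    `records` is an iterable of (group, outcome) pairs, where outcome is
    1 for a favorable decision (e.g. loan approved) and 0 otherwise.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += outcome
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(records, protected, reference):
    """Selection rate of the protected group divided by the reference group's."""
    rates = selection_rates(records)
    if rates[reference] == 0:
        raise ValueError("reference group has no favorable outcomes")
    return rates[protected] / rates[reference]


# Hypothetical decisions: group "A" approved 6 of 10, group "B" approved 3 of 10.
decisions = [("A", 1)] * 6 + [("A", 0)] * 4 + [("B", 1)] * 3 + [("B", 0)] * 7

ratio = disparate_impact_ratio(decisions, protected="B", reference="A")
flagged = ratio < 0.8  # assumed screening threshold (four-fifths rule of thumb)
print(f"disparate impact ratio = {ratio:.2f}, flagged = {flagged}")
```

A flagged ratio is a prompt for investigation, not a verdict. Toolkits such as IBM's AI Fairness 360 offer this and many other metrics; the plain-Python version is only meant to show what is being measured.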

4. Why is explainability important in AI, and how do you ensure AI decisions are transparent to stakeholders?

Poor explainability is at the heart of many AI compliance problems. In regulated sectors like finance or healthcare, users and regulators need to know how automated systems reach their decisions.

Your answer should highlight:

- Use of interpretable models when possible

- Tools like SHAP, LIME, and model cards

- Implementation of meaningful explanations tailored to users

- The role of explainability in trust, auditability, and regulatory adherence

The EU AI Act sets transparency requirements for high-risk AI applications, and GDPR entitles individuals to meaningful information about the logic behind automated decisions (Dialzara.com).
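To show what an interpretable model offers, here is a minimal sketch of a linear scoring model whose output can be broken down feature by feature. It is not SHAP or LIME, which target more complex models; it is a simpler stand-in, and the weights, feature names, and applicant values are all invented for illustration.

```python
def explain_linear_score(weights, bias, features):
    """Break a linear score into per-feature contributions.

    score = bias + sum(weights[name] * features[name])
    Returns the score and the contributions sorted by absolute size.
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical credit-style model; weights and feature names are made up.
WEIGHTS = {"income_k": 0.5, "missed_payments": -2.0, "years_at_address": 0.25}
BIAS = 1.0

applicant = {"income_k": 4, "missed_payments": 2, "years_at_address": 4}
score, ranked = explain_linear_score(WEIGHTS, BIAS, applicant)

print(f"score = {score}")
for name, value in ranked:
    direction = "raised" if value > 0 else "lowered"
    print(f"{name} {direction} the score by {abs(value)}")
```

Because every contribution is a simple product, the ranked list can be turned directly into a plain-language explanation for the affected person or an auditor.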

For professionals aiming to deepen their expertise, the Generative AI in Risk and Compliance certification offers cutting-edge insights and practical frameworks to navigate the evolving regulatory landscape with confidence.

5. How would you approach risk management and auditing for AI systems?

This question tests your understanding of lifecycle oversight in AI. Start by describing a risk-based approach: identify privacy, ethical, operational, and security risks at each phase—from data collection to deployment.

Include strategies such as:

- Regular audits and compliance checkpoints

- Documentation of training data and model performance

- Use of monitoring tools to detect drift or anomalies

- Aligning practices with recognized standards (e.g., NIST AI Risk Management Framework)

Explain how you would embed these processes into governance structures to make AI auditable and compliant by design (Dialzara.com).
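Drift monitoring can be sketched with the Population Stability Index (PSI), one common way to compare a feature's distribution at training time with its distribution in production. The sample values, bin edges, and the 0.25 alert threshold below are assumptions chosen for illustration; the threshold is a frequently quoted rule of thumb, not a regulatory requirement.

```python
import math


def population_stability_index(expected, actual, edges):
    """PSI between a baseline sample and a recent sample of one numeric feature.

    `edges` are the inner bin boundaries, so values fall into len(edges) + 1 bins.
    """
    def bin_shares(values):
        counts = [0] * (len(edges) + 1)
        for value in values:
            index = sum(value >= edge for edge in edges)  # edges at or below value
            counts[index] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(count / len(values), 1e-6) for count in counts]

    expected_shares = bin_shares(expected)
    actual_shares = bin_shares(actual)
    return sum(
        (a - e) * math.log(a / e)
        for e, a in zip(expected_shares, actual_shares)
    )


baseline = [10, 20, 30, 40, 50, 60, 70, 80]  # hypothetical training-time values
recent = [60, 70, 80, 90, 60, 70, 80, 90]    # hypothetical production values
EDGES = [25, 50, 75]
ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cut-off

psi = population_stability_index(baseline, recent, EDGES)
drift_detected = psi > ALERT_THRESHOLD
print(f"PSI = {psi:.2f}, drift detected = {drift_detected}")
```

In practice a check like this would run on a schedule for each monitored feature, with alerts feeding into the audit trail.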

6. What steps would you take to develop and enforce a Responsible AI use policy within our organization?

Effective AI policy development begins by identifying core values: fairness, transparency, accountability, and privacy. Interviewers look for candidates who can move from theory to implementation.

Your strategy should include:

- Building a cross-functional AI governance committee

- Defining ethical principles and acceptable AI use cases

- Establishing internal review processes for model approval

- Integrating checkpoints (e.g., bias testing, data review) into development workflows

- Providing organization-wide training on AI compliance

Explain how the policy will evolve with emerging regulations and serve as the foundation for your AI compliance framework (Dialzara.com).
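The idea of integrating checkpoints into development workflows can be sketched as a simple release gate. The class, field names, and the three checks below are hypothetical; a real policy would define its own criteria and would likely enforce them in a CI pipeline or approval tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReleaseCandidate:
    """Hypothetical record of the policy checkpoints for one model release."""
    name: str
    bias_test_passed: bool
    data_review_done: bool
    approved_by: Optional[str] = None  # reviewer from the governance committee


def release_blockers(candidate):
    """Return the list of unmet checkpoints; an empty list means release may proceed."""
    blockers = []
    if not candidate.bias_test_passed:
        blockers.append("bias testing not passed")
    if not candidate.data_review_done:
        blockers.append("data review not completed")
    if not candidate.approved_by:
        blockers.append("no governance sign-off")
    return blockers


candidate = ReleaseCandidate(
    name="credit-scoring-v2",  # made-up model name
    bias_test_passed=True,
    data_review_done=False,
)
blockers = release_blockers(candidate)
print("blocked:" if blockers else "cleared", blockers)
```

Returning the full list of blockers, rather than stopping at the first failure, gives the development team a complete picture of what the policy still requires.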

7. What ethical considerations do you evaluate when AI systems make decisions, and how do you address potential ethical conflicts?

Ethics and compliance are closely interlinked. Candidates must show that they can anticipate the dilemmas that arise when legal obligations, organizational goals, and public expectations do not align.

Key considerations include:

- Avoiding harm

- Promoting fairness

- Preserving autonomy (e.g., allowing opt-outs or human appeal mechanisms)

- Preventing misuse or overreach

Illustrate how you use ethical review boards or internal audits to address these conflicts and ensure that decisions align with both the law and organizational values (IBM.com).

8. What kinds of documentation and reporting would you produce to demonstrate AI compliance?

Documentation is the foundation of demonstrable compliance and accountability. Strong candidates will show a systematic approach to record management.

Examples include:

- Model cards (describing model intent, limitations, and metrics)

- Datasheets for datasets (providing details on origin, composition, and bias reviews)

- Logs of decision outcomes and incident tracking

- Regulatory compliance reports (e.g., GDPR, AI Act readiness)

Explain how you’d use this documentation to support audits, stakeholder communication, and legal inquiries (Dialzara.com).
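The documentation items above can be kept as structured records so they are easy to version and hand to auditors. The sketch below assumes a minimal model card with the fields named in the list (intent, limitations, metrics) plus a decision-log entry; the schema and all values are hypothetical rather than a standard format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ModelCard:
    """Minimal, hypothetical model card: intent, limitations, and metrics."""
    model_name: str
    version: str
    intended_use: str
    limitations: list = field(default_factory=list)
    metrics: dict = field(default_factory=dict)

    def to_json(self):
        return json.dumps(asdict(self), indent=2, sort_keys=True)


def decision_log_entry(model_version, subject_id, outcome, reviewer=None):
    """Build one audit-log record as a JSON line with a UTC timestamp."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "subject_id": subject_id,
        "outcome": outcome,
        "human_reviewer": reviewer,
    })


# All values below are made up for illustration.
card = ModelCard(
    model_name="credit-scoring",
    version="2.0",
    intended_use="Pre-screening of consumer loan applications",
    limitations=["Not validated for business loans"],
    metrics={"accuracy": 0.91},
)
entry = decision_log_entry("2.0", subject_id="applicant-001", outcome="referred")

print(card.to_json())
print(entry)
```

Storing these records alongside the model artifacts makes it straightforward to show which version made a given decision and what was known about its limits at the time.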

9. How do you balance the drive for AI innovation with the need to meet regulatory compliance and ethical standards?

This question asks whether you see compliance as an enabler of innovation rather than a constraint on it. Your answer should reflect a compliance-by-design mindset.

Highlight strategies like:

- Embedding risk reviews into agile development workflows

- Collaborating early with legal and compliance teams

- Designing sandbox environments for ethical experimentation

- Communicating the long-term value of responsible AI adoption

As AI becomes more regulated, organizations need leaders who can build agile yet accountable systems that fulfill the promise of AI while respecting boundaries (IBM.com).

10. How do you stay updated with the rapidly evolving landscape of AI regulations and guidelines?

AI regulation is evolving quickly, and professionals must stay informed. A solid answer includes:

- Following regulatory bodies (e.g., EU Commission, NIST, ISO/IEC)

- Subscribing to AI policy newsletters or legal briefings

- Participating in compliance webinars and conferences

- Joining communities focused on AI governance

- Contributing to internal knowledge sharing

Demonstrating a proactive learning approach shows employers you’ll help keep them on the cutting edge of both innovation and compliance (Hirevire.com).


Conclusion

Organizations continue to deploy AI in increasingly sensitive areas such as hiring, lending decisions, diagnostics, and risk modeling, creating a growing demand for skills at the intersection of AI and compliance.

The ten interview questions above reflect the core knowledge areas needed to lead in this space.

Fairness, explainability, documentation, and regulatory frameworks are some of the topics you should be able to discuss with confidence when pursuing a career in AI governance, risk, or legal compliance. Doing so shows that you are prepared to lead responsible innovation.

As AI takes on a larger role in compliance work, organizations will need leaders who can adapt to new regulations and help chart a path toward a safer and more ethical AI future.

In these interviews, fluency in ethical design principles, a sound grasp of the AI compliance landscape, and a commitment to staying informed will set you apart from other compliance professionals as someone ready for the AI era.
